Sound and movement have been associated since the beginning of human thought. An acoustic perception triggers a certain behavior, and a movement in turn generates sound waves. These two poles develop an interplay between action and reaction.

Both depend on each other and can be combined under the concept of rhythm. So there are interactions, but how can they be classified? They are either predetermined or arise spontaneously from momentary emotional states. Each of our movements is unique, yet our movements follow similar patterns. Our emotions can be reflected in the way we move.

What moves you, and what impact does your body language have on your environment and on yourself? Are you free to move, or do you conform to your environment? Do your gestures tell you something about yourself, or are they just a reaction to what you are doing?

This work presents a media-art interpretation that shapes these questions into an individual experience. The resulting room installation can be enjoyed as a performance tool and experimental sound instrument, and is intended to help us overcome our self-imposed obstacles to movement.

The concept and the implementation of the work came about as part of my Bachelor’s thesis at the University of Augsburg and were supervised by Professor Thomas Rist, Tobias Grewenig and Jürgen Hefele.

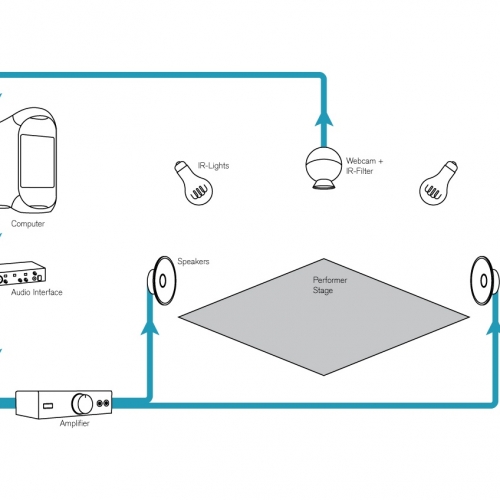

Motion capture is handled by a self-built visual tracking device made from a modified USB camera with high sensitivity in the infrared range. An optical filter removes the other spectral ranges.
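As a rough illustration of what such a camera makes possible: an infrared-filtered image is mostly dark, so bright regions can be separated with a simple threshold and reduced to a single position. The Python sketch below is not the tracking used in the installation. The function names, the threshold value and the tiny example frames are all assumptions made for illustration.

```python
import math

def bright_centroid(frame, threshold=200):
    """Return the (x, y) centroid of all pixels >= threshold, or None.

    `frame` is a list of rows of grayscale values (0..255). The threshold
    is a hypothetical value; a real setup would need calibration.
    """
    count = sum_x = sum_y = 0
    for y, row in enumerate(frame):
        for x, value in enumerate(row):
            if value >= threshold:
                count += 1
                sum_x += x
                sum_y += y
    if count == 0:
        return None  # nothing bright enough in this frame
    return (sum_x / count, sum_y / count)

def displacement(previous, current):
    """Distance the centroid moved between two frames (0.0 if either is missing)."""
    if previous is None or current is None:
        return 0.0
    return math.hypot(current[0] - previous[0], current[1] - previous[1])

# Two made-up 4x3 frames with a bright spot that moves down and to the right.
frame_a = [[0, 0, 0, 0],
           [0, 255, 255, 0],
           [0, 0, 0, 0]]
frame_b = [[0, 0, 0, 0],
           [0, 0, 0, 0],
           [0, 0, 255, 255]]

position_a = bright_centroid(frame_a)
position_b = bright_centroid(frame_b)
movement = displacement(position_a, position_b)
```

The displacement between consecutive frames gives a simple measure of how fast something is moving, which is the kind of value a later mapping stage could work with.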

The software was implemented in Max/MSP, using cv.jit for image analysis. To map the obtained data onto musical parameters, I implemented a three-dimensional emotional model that originates in the theoretical work of the experimental psychologist Wilhelm Wundt. The resulting data are processed with the Realtime Composition Library (RTC) provided by Karlheinz Essl.
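To make the idea of such a mapping more concrete, here is a minimal Python sketch. It is not the Max/MSP patch itself. Wundt's model describes feeling along three axes: pleasure and displeasure, excitement and calm, tension and relaxation. Everything else below is an assumption made for illustration: the motion features (`speed`, `openness`, `jerkiness`), the names, and the choice and ranges of the musical parameters.

```python
from dataclasses import dataclass

def clamp(value, low=-1.0, high=1.0):
    """Limit a value to the range [low, high]."""
    return max(low, min(high, value))

@dataclass
class Feeling:
    """A point in a Wundt-style three-dimensional space, each axis in -1..+1."""
    pleasure: float  # -1 displeasure .. +1 pleasure
    arousal: float   # -1 calm        .. +1 excitement
    tension: float   # -1 relaxation  .. +1 tension

def feeling_from_motion(speed, openness, jerkiness):
    """Place hypothetical motion features (each normalized to 0..1) in the model."""
    return Feeling(
        pleasure=clamp(2 * openness - 1),
        arousal=clamp(2 * speed - 1),
        tension=clamp(2 * jerkiness - 1),
    )

def scale(value, out_low, out_high):
    """Map a value from -1..+1 linearly onto out_low..out_high."""
    return out_low + (value + 1) / 2 * (out_high - out_low)

def musical_parameters(feeling):
    """Turn a feeling into illustrative musical parameters (assumed ranges)."""
    return {
        "tempo_bpm": scale(feeling.arousal, 60, 180),
        "midi_pitch_center": round(scale(feeling.pleasure, 48, 72)),
        "dissonance": scale(feeling.tension, 0.0, 1.0),
    }

# A mover with medium speed, medium openness and medium jerkiness
# lands in the center of the model.
neutral = feeling_from_motion(speed=0.5, openness=0.5, jerkiness=0.5)
parameters = musical_parameters(neutral)
```

Keeping the emotional model as its own intermediate step means the tracking side and the sound side can be changed independently: different movement features can feed the same three axes, and the same three axes can drive different musical material.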